Contract Testing with Pact in .NET Core

When working in a microservice architecture it can be hard to verify the whole system end-to-end because of all the moving parts involved. The usual proposed solution is to write integration tests, which verify a couple of parts of the system together with the rest mocked out. If all these subsections of the system pass their respective integration tests we can be confident in the system, right?

The Problem with Integration Tests

Integration tests are a good way of verifying our system as they use real (not mocked out) components but quite a lot can go wrong. Integration tests are:

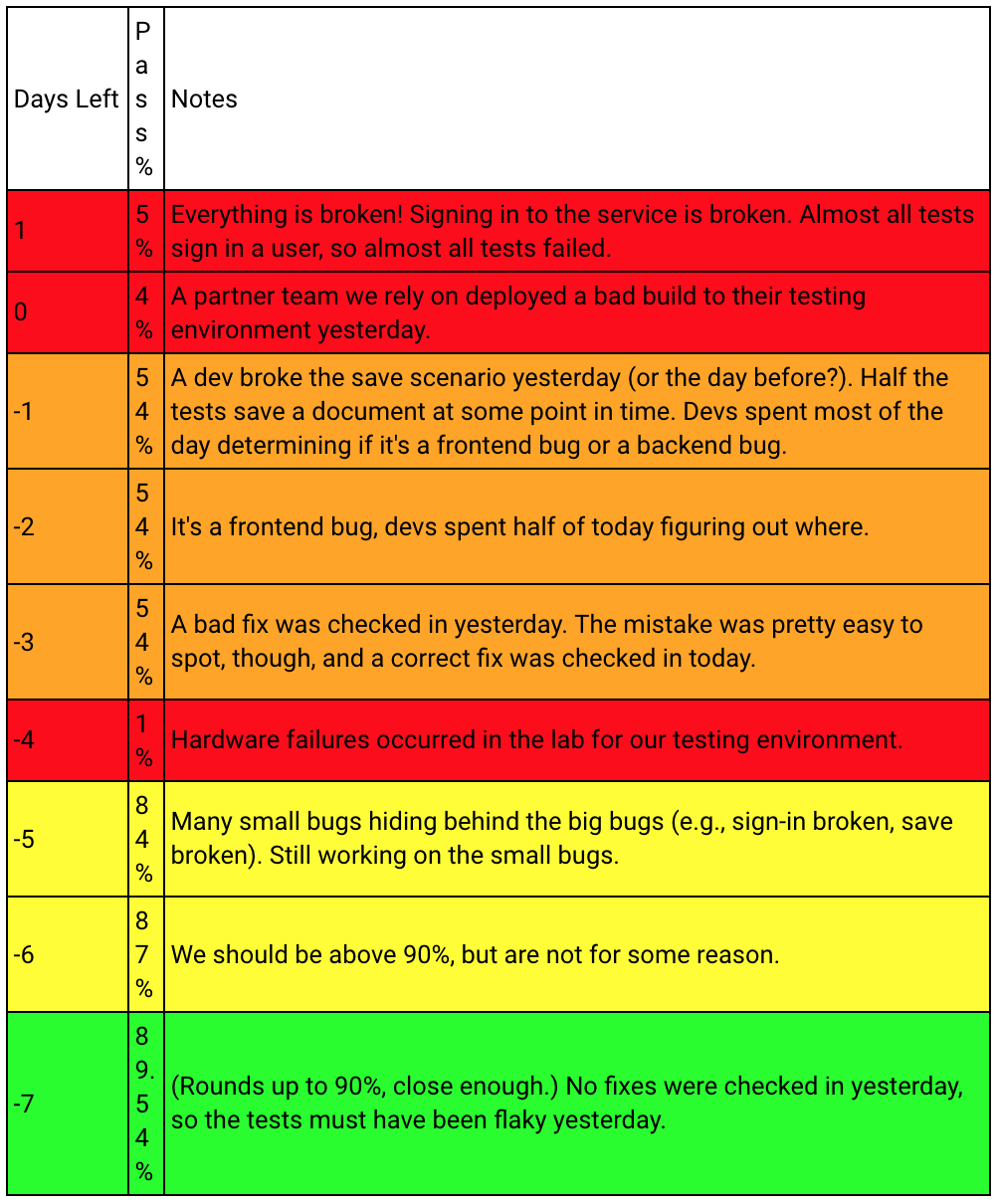

Unstable

Much like end-to-end tests, integration tests take a lot of effort to keep up to date and are often flaky and unstable. Unstable tests are worse than no tests, as they train your team to ignore test results.

Broadly Scoped & Unspecific

Think about when you are writing some code: one of the first design practices you might have learnt is to keep scope tight. For example, if only the code inside a loop needs a variable, declare it inside the loop so that code outside the loop cannot access it. That way, if there is a bug involving that variable, you know it can only have been modified by code within the loop.
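
As a small illustration of that practice, here is a sketch in C#. The `ScopeExample` class and `TotalLength` method are made-up names for this example only.

```csharp
using System;
using System.Collections.Generic;

public static class ScopeExample
{
    // Sums the lengths of the given words after trimming whitespace.
    public static int TotalLength(IEnumerable<string> words)
    {
        var total = 0; // needed after the loop, so it is declared outside it

        foreach (var word in words)
        {
            // 'trimmed' is only needed inside the loop, so it is declared here.
            // Nothing outside the loop body can read or modify it.
            var trimmed = word.Trim();
            total += trimmed.Length;
        }

        // 'trimmed' is out of scope here; referencing it would not compile.
        return total;
    }

    public static void Main()
    {
        Console.WriteLine(TotalLength(new[] { " pact ", "test" })); // prints 8
    }
}
```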

A tight scope should also apply to your tests. When your tests fail you should be able to quickly pinpoint where the failure happened and start to understand why. This is why developers and QAs love unit tests: they are specific and tightly scoped, so when a unit test fails you typically know exactly why and how to go about developing a fix. This isn’t the case with integration tests. When they fail it could be a whole host of things! Perhaps it is the first service, maybe the third? What about the datastores, did we mock them out? Were those mocks correct?

Slow

Integration tests are just slow. It is slow to spin up the subset of your system under test. It is slow to run the tests against the system under test. If you want speed in your test suite, integration tests won’t help.

Expensive

All that time spent trying to keep your integration tests up to date and fast comes at a cost. Developers or QAs will need to spend quite a lot of time keeping the tests green, in the same way they keep the end-to-end tests green.

An Alternative: Contract Testing

Instead of creating unstable, broadly scoped, slow and expensive integration tests there is an alternative: contract testing. Contract testing works in a different way to integration testing. Instead of testing real interactions within a subset of the system, contract tests generally work by thinking in terms of a data provider and a data consumer:

- The Providing API has a suite of unit tests, referred to as contract tests, which check that the API returns the expected data for each of the API calls it supports.

- The Consuming APIs don’t have integration tests with the Providing API. Instead they trust(!) that the Providing API will return the data as they expect it, and that it will honour the contract it has with the Consuming API.

- As long as the Providing API team keeps their contract tests up to date and _communicates_ with the teams looking after the Consuming APIs, everything will be fine.
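
To make the provider side of this concrete, here is a minimal sketch of a contract test in C#. It assumes .NET Core 3.0 or later (for `System.Text.Json`). The `UserProvider` endpoint, its fields and the `HonoursContract` check are all hypothetical names invented for illustration; a real suite would use a test framework and call the actual API.

```csharp
using System;
using System.Text.Json;

// Hypothetical provider logic; in a real API this would sit behind a controller.
public static class UserProvider
{
    public static string GetUserJson(int id) =>
        JsonSerializer.Serialize(new { id, name = "Ada", email = "ada@example.com" });
}

public static class UserContractTest
{
    // Returns true when the response carries the fields consumers were promised:
    // a numeric "id" and a string "name". Extra fields are allowed.
    public static bool HonoursContract(string json)
    {
        using var doc = JsonDocument.Parse(json);
        var root = doc.RootElement;

        return root.TryGetProperty("id", out var id) && id.ValueKind == JsonValueKind.Number
            && root.TryGetProperty("name", out var name) && name.ValueKind == JsonValueKind.String;
    }

    public static void Main()
    {
        var ok = HonoursContract(UserProvider.GetUserJson(1));
        Console.WriteLine(ok ? "contract honoured" : "contract broken");
    }
}
```

Note that nothing in this sketch tells the Providing API team which fields consumers actually rely on; that knowledge has to come from talking to them.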

Nice But Unrealistic

The three steps above are idealistic. Even with the best intentions, Providing API teams will struggle to keep Consuming API teams up to date. What is needed is a way for teams to discover changes for themselves as far as possible, so that inter-team conversations are about changes the teams have already found.

More Realistic Contract Testing: Pact.io

Instead of relying on teams to keep each other up to date, what if the computer did most of the work? This is the problem the Pact.io testing framework solves, and it is what makes contract testing more realistic. Pact follows an updated flow:

- The Consuming API has a suite of component tests, referred to as _pact tests_, which use Pact to mock out calls to the Providing API. Once the test run has completed, a PactFile is created. This is a JSON record of all the requests the Consuming API made to the Providing API and the mocked responses it received.

- The Providing API has a suite of unit tests which consume the PactFile generated by the Consuming API, replay the requests against the Providing API and check that its actual responses match the recorded ones.

- If the Providing API’s pact tests fail, that team’s build goes red and they can speak to the Consuming API team to see which mocks they are using and whether they are correct.
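
The three steps above can be sketched without the Pact library at all. The following C# is a deliberately simplified illustration, not how Pact itself is implemented: the `Interaction` shape, the `WritePact` and `Verify` helpers and the `/users/1` endpoint are all assumptions made for this example, and it compares response bodies by exact string equality rather than with Pact's matching rules. It assumes .NET Core 3.0 or later.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.Json;

// One recorded request/response pair (hypothetical shape, far simpler than a real PactFile).
public class Interaction
{
    public string Method { get; set; }
    public string Path { get; set; }
    public int Status { get; set; }
    public string Body { get; set; }
}

public static class PactFlowSketch
{
    // Step 1 (consumer): the mocked interactions used in the consumer's tests
    // are written out as a JSON contract.
    public static string WritePact(IEnumerable<Interaction> mockedInteractions) =>
        JsonSerializer.Serialize(mockedInteractions.ToList());

    // Step 2 (provider): replay every recorded request against the real handler
    // and collect a message for each response that differs from the recording.
    public static List<string> Verify(
        string pactJson,
        Func<string, string, (int Status, string Body)> provider)
    {
        var failures = new List<string>();
        foreach (var i in JsonSerializer.Deserialize<List<Interaction>>(pactJson))
        {
            var actual = provider(i.Method, i.Path);
            if (actual.Status != i.Status || actual.Body != i.Body)
            {
                failures.Add($"{i.Method} {i.Path}: expected {i.Status} {i.Body}, " +
                             $"got {actual.Status} {actual.Body}");
            }
        }
        return failures;
    }

    public static void Main()
    {
        var pact = WritePact(new[]
        {
            new Interaction { Method = "GET", Path = "/users/1", Status = 200, Body = "{\"id\":1,\"name\":\"Ada\"}" }
        });

        // Hypothetical provider that has renamed "name" to "fullName".
        (int, string) Provider(string method, string path) =>
            (200, "{\"id\":1,\"fullName\":\"Ada\"}");

        // Step 3: a non-empty failure list is what would turn the provider build red.
        foreach (var failure in Verify(pact, Provider))
            Console.WriteLine(failure);
    }
}
```

The useful property is that the expectations come from the consumer's own mocks, so a breaking change on the provider side shows up as a failing provider test rather than as a surprise in production.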

Now communication is driven by failing tests, and it is the Consuming API team that drives the tests for the Providing API team. This makes it much more likely that the Providing API team will speak to the Consuming API team: if they change their API without doing so, the Providing API pact tests will fail and their build will break.

Pact Test Implementation with .NET Core

Pact has been implemented in many languages, including .NET Core via the Pact-Net project on GitHub, so you can start writing pact tests in your .NET Core projects. To get started you can check their examples and, when you are ready, check out my workshop on GitHub.